Galois Finite Fields and the Advanced Encryption Standard (AES)

CS

463/480 Lecture, Dr.

Lawlor

Because every finite field of a given

size is equivalent, any field with 256 elements always has the

same universal properties. Galois, who died at age 20 in the chaos of post-Napoleon France,

blazed the mathematical trail to much of this area, so we call the

field with 256 elements GF(28), or "Galois Field with 28 elements".

Weirdly, members of a field of size pn are

equivalent to polynomials of degree up to n, with coefficients chosen from integers modulop (a "field

with characteristic p"). For example, we might do the obvious thing and

represent numbers like 0 or 1 with the polynomial 0 or 1. So

far, so good, but 2 is problematic because we can only have

coefficients modulo p, so we bump up a power of the polynomial to

x, and pick "2=10=1x+0". This results in a simple

correspondence between the bits in a binary representation of a

number and the terms of the corresponding field polynomial.

Number

|

Binary

|

GF(28)

Polynomial

|

Simplified

|

0

|

0

|

0

|

0

|

1

|

1

|

1

|

1

|

2

|

10

|

1x+0

|

x

|

3

|

11

|

1x+1

|

x+1

|

4

|

100

|

1x2+0x+0

|

x2 |

5

|

101

|

1x2+0x+1 |

x2+1 |

8

|

1000

|

1x3+0x2+0x+0 |

x3 |

16

|

10000

|

1x4+0x3+0x2+0x+0 |

x4 |

21

|

10101

|

1x4+0x3+1x2+0x+1 |

x4+x2+1 |

Luckily, the mathematical structure is

just there to compute the values of the substitution tables, or

motivate some simple shifting and XOR work--we certainly don't

have to "convert numbers to polynomials" at runtime, and we never

actually evaluate the polynomials at any x.

Finite

Field Addition

We can figure out how addition works in

a finite field by adding the underlying polynomials. For

example:

1 + 2 = 1+10 =

(1)+(1x+0) = 1x+1 = 11 = 3

Yet because we only have single-bit

polynomial coefficients,

2 + 2 = 10+10 =

(1x+0)+(1x+0) = 2x = 0x = 0

Because of this single-bit binary

wraparound, it turns out that in GF(28), addition, subtraction, and XOR are

all the same operation. This seems like a pretty radical

departure from ordinary addition, but in a circuit, it just

corresponds to throwing away the carry bits from each single-bit

addition.

Here's an addition table for GF(24), a 4-bit (single hex digit) finite field:

0 1 2 3 4 5 6 7 8 9 a b c d e f

1 0 3 2 5 4 7 6 9 8 b a d c f e

2 3 0 1 6 7 4 5 a b 8 9 e f c d

3 2 1 0 7 6 5 4 b a 9 8 f e d c

4 5 6 7 0 1 2 3 c d e f 8 9 a b

5 4 7 6 1 0 3 2 d c f e 9 8 b a

6 7 4 5 2 3 0 1 e f c d a b 8 9

7 6 5 4 3 2 1 0 f e d c b a 9 8

8 9 a b c d e f 0 1 2 3 4 5 6 7

9 8 b a d c f e 1 0 3 2 5 4 7 6

a b 8 9 e f c d 2 3 0 1 6 7 4 5

b a 9 8 f e d c 3 2 1 0 7 6 5 4

c d e f 8 9 a b 4 5 6 7 0 1 2 3

d c f e 9 8 b a 5 4 7 6 1 0 3 2

e f c d a b 8 9 6 7 4 5 2 3 0 1

f e d c b a 9 8 7 6 5 4 3 2 1 0

(Try this in NetRun now!)

Rather like Sudoku, for inverses to

exist we need each output to appear in each row and column exactly

once.

Finite

Field Multiplication

Multiplication in a finite field works

just like polynomial multiplication (remember Algebra II?), which

means:

2*2=10*10=

(1x+0)*(1x+0)=x*x=x2=1x2+0x+0=100=4

This works fine except for the problem

of generating polynomial degrees higher than n: for example, 16*16=x4*x4=x8, which is just beyond GF(28). To fix this, we "reduce" higher degrees by

subtracting off multiples of a "reducing polynomial", which for

AES isx8 + x4 + x3 + x + 1 (in

hex, 0x11b). There are lots of choices of reducing

polynomial (they need to be "irreducible", the polynomial

equivalent of prime, to work out), but it turns out they only

permute the numbers, not change the underlying mathematical

structure.

In this case, we subtract off the

reducing polynomial once:

16*16=x4*x4=x8=x8-(x8 + x4 + x3 + x + 1)=-(x4 + x3 + x + 1)

Since

every element is its own additive inverse, this is:

...=x4 + x3 + x + 1=11011=27.

Note that an even times an even *can*

give you an odd this way!

The reduction operation gives

multiplication in a finite field a strange flavor. For

example, here's the 256-entry hex multiplication table (from Wikipedia) for multiplying by 2 (1x+0) in GF(28):

0x00,0x02,0x04,0x06,0x08,0x0a,0x0c,0x0e,0x10,0x12,0x14,0x16,0x18,0x1a,0x1c,0x1e,

0x20,0x22,0x24,0x26,0x28,0x2a,0x2c,0x2e,0x30,0x32,0x34,0x36,0x38,0x3a,0x3c,0x3e,

0x40,0x42,0x44,0x46,0x48,0x4a,0x4c,0x4e,0x50,0x52,0x54,0x56,0x58,0x5a,0x5c,0x5e,

0x60,0x62,0x64,0x66,0x68,0x6a,0x6c,0x6e,0x70,0x72,0x74,0x76,0x78,0x7a,0x7c,0x7e,

0x80,0x82,0x84,0x86,0x88,0x8a,0x8c,0x8e,0x90,0x92,0x94,0x96,0x98,0x9a,0x9c,0x9e,

0xa0,0xa2,0xa4,0xa6,0xa8,0xaa,0xac,0xae,0xb0,0xb2,0xb4,0xb6,0xb8,0xba,0xbc,0xbe,

0xc0,0xc2,0xc4,0xc6,0xc8,0xca,0xcc,0xce,0xd0,0xd2,0xd4,0xd6,0xd8,0xda,0xdc,0xde,

0xe0,0xe2,0xe4,0xe6,0xe8,0xea,0xec,0xee,0xf0,0xf2,0xf4,0xf6,0xf8,0xfa,0xfc,0xfe,

0x1b,0x19,0x1f,0x1d,0x13,0x11,0x17,0x15,0x0b,0x09,0x0f,0x0d,0x03,0x01,0x07,0x05,

0x3b,0x39,0x3f,0x3d,0x33,0x31,0x37,0x35,0x2b,0x29,0x2f,0x2d,0x23,0x21,0x27,0x25,

0x5b,0x59,0x5f,0x5d,0x53,0x51,0x57,0x55,0x4b,0x49,0x4f,0x4d,0x43,0x41,0x47,0x45,

0x7b,0x79,0x7f,0x7d,0x73,0x71,0x77,0x75,0x6b,0x69,0x6f,0x6d,0x63,0x61,0x67,0x65,

0x9b,0x99,0x9f,0x9d,0x93,0x91,0x97,0x95,0x8b,0x89,0x8f,0x8d,0x83,0x81,0x87,0x85,

0xbb,0xb9,0xbf,0xbd,0xb3,0xb1,0xb7,0xb5,0xab,0xa9,0xaf,0xad,0xa3,0xa1,0xa7,0xa5,

0xdb,0xd9,0xdf,0xdd,0xd3,0xd1,0xd7,0xd5,0xcb,0xc9,0xcf,0xcd,0xc3,0xc1,0xc7,0xc5,

0xfb,0xf9,0xff,0xfd,0xf3,0xf1,0xf7,0xf5,0xeb,0xe9,0xef,0xed,0xe3,0xe1,0xe7,0xe5

The whole first half of the table is

boringly identical to integer multiplication, but the reduction

starts being needed halfway through, and the results start

bouncing around, especially in the low bits.

Here's a multiplication table for GF(24), using a reduction polynomial of 0x13 == 10011 == x4+x+1. (See some more detailed GF(16) examples here.)

0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0

0 1 2 3 4 5 6 7 8 9 a b c d e f

0 2 4 6 8 a c e 3 1 7 5 b 9 f d

0 3 6 5 c f a 9 b 8 d e 7 4 1 2

0 4 8 c 3 7 b f 6 2 e a 5 1 d 9

0 5 a f 7 2 d 8 e b 4 1 9 c 3 6

0 6 c a b d 7 1 5 3 9 f e 8 2 4

0 7 e 9 f 8 1 6 d a 3 4 2 5 c b

0 8 3 b 6 e 5 d c 4 f 7 a 2 9 1

0 9 1 8 2 b 3 a 4 d 5 c 6 f 7 e

0 a 7 d e 4 9 3 f 5 8 2 1 b 6 c

0 b 5 e a 1 f 4 7 c 2 9 d 6 8 3

0 c b 7 5 9 e 2 a 6 1 d f 3 4 8

0 d 9 4 1 c 8 5 2 f b 6 3 e a 7

0 e f 1 d 3 2 c 9 7 6 8 4 a b 5

0 f d 2 9 6 4 b 1 e c 3 8 7 5 a

This is generated by the following code:

// Bitwise ("peasant") multiplication, using galois addition

for (int bit=3;bit>=0;bit--) {

if (b&(1<<bit))

c=c^(a<<bit);

}

// Reduce back into galois field (can also be folded into above loop)

int irr=0x13; // represents reduction polynomial

int high=0x10; // high bit of above

for (int bit=3;bit>=0;bit--) {

if (c&(high<<bit)) // if that bit is set

c=c^(irr<<bit); // galois "subtract" it off

}

(Try

this in NetRun now!)

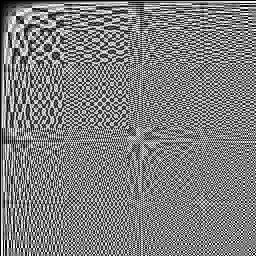

For

GF(2^8), the AES field, this is the multiplication table:

Note that forward

multiplication is just a short algorithm, but it's weirdly

nonlinear enough that division in a finite field is

hard--typically you build a table of multiplicative inverses,

and multiply by the inverse. You can also

perform both multiplication and division efficiently by making a

table of logarithms and antilogarithms. Tables work

for these fields because there are only 256 elements!

AES's

Operations

Each round of AES consists

of these operations, on a 4x4 grid of bytes.

- Each byte in the grid is run through an S-box.

This consists of a multiplicative inverse, followed by an affine

transformation--a series of additions and multiplies by 0 or

1. The affine transformation fixes the problem with 0 (no

multiplicative inverse, so by convention 1/0=0), and messes up

the mathematics so you can't string multiplicative inverses

together.

- ShiftRows shifts each byte horizontally in the 4x4 grid.

Row i is shifted

circularly by i bytes.

- MixColumns computes a linear

transformation on each column. A linear transformation is

just a series of finite field multiplications and additions. The

matrix used is invertible, so there's an inverse

operation. It's also chosen such that every output byte

changes if any single input byte changes.

- Finally, the round

keys are

added (the same operation as XOR in a finite field) to each

byte.

Why

Bother?

The big advantages of working in a

finite field are:

- Numbers stay small--everything in GF(28) is always

one byte, even after multiplication or division.

- Inverses exist for each operation, which makes decryption

possible. This is superior to the simpler modular

arithmetic in a power of two modulus, where multiplying by 2

loses the high bit.

- The mathematics are well understood, dating to the

1830's. This makes it easy for you to pick good mixing

functions, and makes it less likely somebody is going to

surprise you with a new analysis technique.

- There are enough operations for them to combine in rich ways:

AES combines division in the first half of the S box, a

transpose in the row shift, and matrix operations during

MixColumns and the second half of the S box. Matrix

operations don't commute with each other, and the transpose and

division keeps you from multiplying the matrices together.

By contrast, for example, if you've only got addition, you're a

shift cipher no matter how many operations you do; if you've

only got addition and multiplication, you're a linear cipher no

matter how many operations you do.

If you're curious, FIPS

197 has the gory details with examples, and the Rijndael AES paper is

pretty readable, and has details on design motivation.