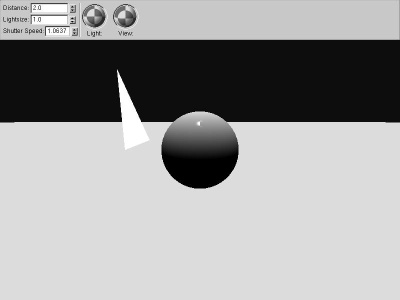

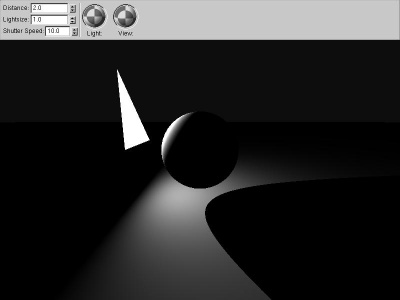


`fixed_angle`: We begin with a classic OpenGL fixed-direction, infinite-distance light source.
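As a reference point, the fixed-direction model reduces to the Lambertian term max(0, N·L), where every pixel uses the same constant light vector L. The page does not show its shader code, so the Python sketch below is only an illustration. `LIGHT_DIR` and `fixed_direction_diffuse` are hypothetical names.

```python
import math

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def normalize(v):
    """Scale v to unit length."""
    length = math.sqrt(dot(v, v))
    return tuple(c / length for c in v)

# Hypothetical constant direction toward a light at infinite distance;
# every pixel uses this same vector.
LIGHT_DIR = normalize((0.0, 1.0, 1.0))

def fixed_direction_diffuse(normal):
    """Lambertian term max(0, N . L) for a directional light.
    The surface position never enters the calculation."""
    return max(0.0, dot(normalize(normal), LIGHT_DIR))

# A flat ground plane has the same normal everywhere, so it is lit uniformly.
print(fixed_direction_diffuse((0.0, 1.0, 0.0)))
```

Because position plays no part in the calculation, a flat floor comes out as a single flat shade. That flatness is what the next variant addresses.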


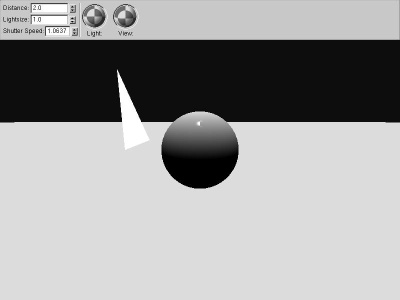

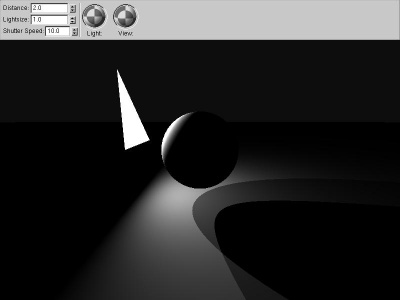

`local_angle`: If we compute the vector pointing to the light source from each pixel, we immediately gain realism. The ground gets darker farther away simply because the vector to the light source tilts farther away from the surface normal, not because of any distance-related falloff.
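The per-pixel version differs from the fixed-direction case in only one respect: the light vector is recomputed from each surface point. The minimal sketch below assumes a point-like light at a hypothetical position `light_pos` and deliberately applies no 1/d² falloff, so any darkening comes purely from the angle.

```python
import math

def sub(a, b):
    return tuple(x - y for x, y in zip(a, b))

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def normalize(v):
    length = math.sqrt(dot(v, v))
    return tuple(c / length for c in v)

def local_angle_diffuse(point, normal, light_pos):
    """Lambertian term with the light vector recomputed per surface point.
    No distance attenuation is applied."""
    to_light = normalize(sub(light_pos, point))
    return max(0.0, dot(normalize(normal), to_light))

# Ground plane (normal +y) under a light 2 units up: brightness drops with
# horizontal distance only because the light vector tilts away from the normal.
for x in (0.0, 2.0, 20.0):
    print(x, local_angle_diffuse((x, 0.0, 0.0), (0.0, 1.0, 0.0), (0.0, 2.0, 0.0)))
```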


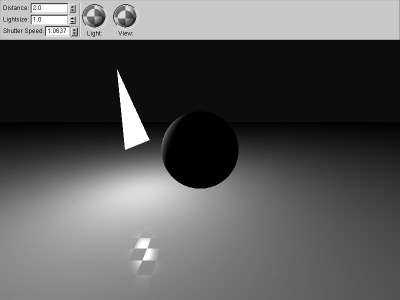

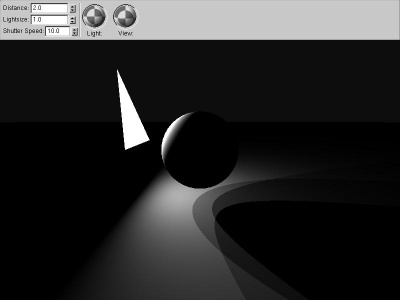

`solid_angle`: If we compute the solid angle subtended by the actual polygon light source, using the classic loop around its vertices (angle-weighted cross products), we get far better local effects, and light sources of different sizes are handled properly.
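The vertex loop can be sketched in Python using Lambert's formula for a polygonal source. For each edge, take the angle θ between the unit vectors to its two vertices. Multiply θ by the dot product of the surface normal with the unit normal of the plane through the receiver and that edge. Sum these terms and divide by 2π. Strictly, this gives the cosine-weighted (projected) solid angle divided by π, which is the point-to-polygon form factor that diffuse shading needs.

The sketch makes several assumptions:
- The polygon lies entirely above the receiver's tangent plane, so no horizon clipping is done.
- The polygon has no degenerate (zero-length) edges.
- The function names are hypothetical.

```python
import math

def sub(a, b):
    return tuple(x - y for x, y in zip(a, b))

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def cross(a, b):
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])

def normalize(v):
    length = math.sqrt(dot(v, v))
    return tuple(c / length for c in v)

def polygon_light_factor(point, normal, vertices):
    """Form factor from a receiver point to a polygonal light (Lambert's
    edge loop). Assumes the polygon is fully above the tangent plane."""
    n = normalize(normal)
    dirs = [normalize(sub(v, point)) for v in vertices]
    total = 0.0
    for i in range(len(dirs)):
        a = dirs[i]
        b = dirs[(i + 1) % len(dirs)]
        # Angle subtended by this edge; clamp to keep acos in its domain.
        theta = math.acos(max(-1.0, min(1.0, dot(a, b))))
        # Unit normal of the plane containing the receiver and this edge.
        gamma = normalize(cross(a, b))
        total += theta * dot(n, gamma)
    # Winding order only flips the sign, so take the magnitude.
    return abs(total) / (2.0 * math.pi)

square = [(1, -1, 1), (1, 1, 1), (-1, 1, 1), (-1, -1, 1)]
print(polygon_light_factor((0, 0, 0), (0, 0, 1), square))
```

A larger or closer light automatically yields a larger factor. To shade, multiply the factor by the light's radiance and the surface albedo.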


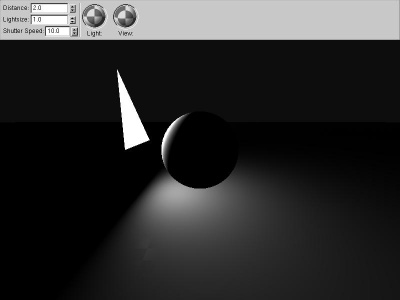

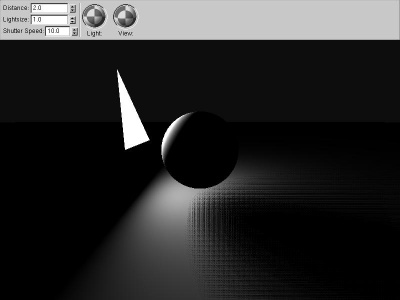

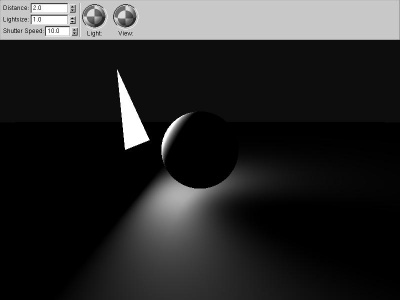

`hard_shadow`: Occluding objects cast shadows by reducing the visible solid angle of the light source. The easiest way to handle this is to shoot a ray from the receiver to the light source's center; if the ray hits something, the receiver is "in shadow". This binary shadowing choice gives clean but unnaturally sharp shadows. If the occluder is also a polygon, you can compute the occluder's solid angle and subtract it from the source's solid angle. This only works if the occluder lies entirely inside the light source as seen from the receiver point; otherwise you have to calculate the area of the intersection.
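Below is a minimal sketch of the binary shadow ray. The text does not say what geometry this test runs against, so the sketch assumes the occluders are spheres given as `(center, radius)` pairs; the function names are hypothetical. The ray is parameterized from the receiver (t = 0) to the light's center (t = 1), so only hits with 0 < t < 1 count.

```python
import math

def sub(a, b):
    return tuple(x - y for x, y in zip(a, b))

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def segment_hits_sphere(origin, target, center, radius, eps=1e-6):
    """True if the segment origin->target passes through the sphere."""
    d = sub(target, origin)          # unnormalized direction, t in [0, 1]
    oc = sub(origin, center)
    a = dot(d, d)
    b = 2.0 * dot(oc, d)
    c = dot(oc, oc) - radius * radius
    disc = b * b - 4.0 * a * c
    if disc < 0.0:
        return False                 # the infinite line misses the sphere
    sq = math.sqrt(disc)
    t0 = (-b - sq) / (2.0 * a)
    t1 = (-b + sq) / (2.0 * a)
    # Count only intersections strictly between the receiver and the light.
    return (eps < t0 < 1.0) or (eps < t1 < 1.0)

def hard_shadow(point, light_center, occluders):
    """Binary visibility: 0.0 if any sphere blocks the ray to the light's
    center, otherwise 1.0."""
    for center, radius in occluders:
        if segment_hits_sphere(point, light_center, center, radius):
            return 0.0
    return 1.0

print(hard_shadow((0, 0, 0), (0, 10, 0), [((0, 5, 0), 1.0)]))
```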


`two_shadow`: One way to approximate soft shadows is to shoot several "shadow rays" and average the results. This is equivalent to approximating the area light source with several point light sources.
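Averaging several shadow rays amounts to repeating the binary test for a few fixed points on the light and taking the fraction that get through. As before, this Python sketch assumes spherical occluders. The fixed `light_samples` list stands in for the set of point lights; it and the function names are hypothetical.

```python
import math

def sub(a, b):
    return tuple(x - y for x, y in zip(a, b))

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def segment_hits_sphere(origin, target, center, radius, eps=1e-6):
    """True if the segment origin->target passes through the sphere."""
    d = sub(target, origin)
    oc = sub(origin, center)
    a = dot(d, d)
    b = 2.0 * dot(oc, d)
    c = dot(oc, oc) - radius * radius
    disc = b * b - 4.0 * a * c
    if disc < 0.0:
        return False
    sq = math.sqrt(disc)
    t0 = (-b - sq) / (2.0 * a)
    t1 = (-b + sq) / (2.0 * a)
    return (eps < t0 < 1.0) or (eps < t1 < 1.0)

def multi_ray_visibility(point, light_samples, occluders):
    """Fraction of fixed light sample points visible from the receiver:
    each sample acts as one point light with equal weight."""
    visible = 0
    for sample in light_samples:
        blocked = any(segment_hits_sphere(point, sample, c, r) for c, r in occluders)
        if not blocked:
            visible += 1
    return visible / len(light_samples)

# Two sample points on the light; a small sphere blocks only the left one.
samples = [(-1.0, 10.0, 0.0), (1.0, 10.0, 0.0)]
print(multi_ray_visibility((0, 0, 0), samples, [((-0.5, 5.0, 0.0), 0.3)]))
```

With N fixed samples, the visibility can only take the N + 1 values 0, 1/N, ..., 1. That quantization is why penumbrae look banded when only a few rays are used.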


`three_shadow`: Shooting more shadow rays (in this case, three) gives a better approximation of soft shadows, but it still isn't very good.


`random_shadow`: Using the same three shadow rays, but shooting them toward random points on the light source, gives far better results. Random numbers aren't easy to generate in a pixel shader, so this version repeats a short series, which produces a rectangular, dithering-like pattern. A better approach would probably be to generate a low-correlation random number texture and look up the random numbers from it.
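The random-number-texture idea can be sketched in Python as a small precomputed table of random (u, v) pairs indexed by pixel coordinates, playing the role of the texture lookup. This is a sketch under assumptions: the rectangular light parameterized by a corner and two edge vectors, the 4×4 tile size, the seed, and all names are hypothetical. With a tile this small the sample pattern repeats every few pixels, much like the short repeated series described above; a larger table of uncorrelated values would spread the repetition out.

```python
import random

TILE = 4       # hypothetical tile size of the random-number "texture"
SAMPLES = 3    # shadow rays per pixel, as in the text

# Precomputed table of random (u, v) pairs in [0, 1)^2, SAMPLES per texel.
_rng = random.Random(12345)
RANDOM_TEXTURE = [[[(_rng.random(), _rng.random()) for _ in range(SAMPLES)]
                   for _ in range(TILE)]
                  for _ in range(TILE)]

def random_light_points(px, py, corner, edge_u, edge_v):
    """Return SAMPLES points on a rectangular light, corner + u*edge_u + v*edge_v,
    using the random numbers stored at this pixel's texel (wrapping every TILE)."""
    texel = RANDOM_TEXTURE[py % TILE][px % TILE]
    return [tuple(c + u * eu + v * ev for c, eu, ev in zip(corner, edge_u, edge_v))
            for u, v in texel]

# Each returned point would be the target of one shadow ray for pixel (1, 2).
for p in random_light_points(1, 2, (-1.0, 10.0, -1.0), (2.0, 0.0, 0.0), (0.0, 0.0, 2.0)):
    print(p)
```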


`conetraced_shadow`: For spherical light sources and occluders, you can use cone tracing to estimate the fraction of the light source covered by the occluder. The big advantage is that you get analytically accurate, smooth results while taking only one sample. The disadvantage is that it only works nicely for spheres! I once wrote a raytracer using cone tracing for everything. Amanatides explained how to do this correctly in "Ray Tracing with Cones" (SIGGRAPH 1984), and Jon Genetti has a paper on "pyramidal" raytracing as well.
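A single-sample coverage estimate for spheres can be sketched as follows. Treat the light and the occluder as cones seen from the receiver, with angular radii asin(r/d). Then measure the overlap of the two angular disks from the angle between their center directions.

This is an assumption-laden approximation, not Amanatides' exact formulation:
- It uses the planar circle-circle intersection area, which is only accurate for small angular sizes.
- It ignores cosine weighting across the light.
- It uses a rough "occluder is nearer than the light" test.
- The function names are hypothetical.

```python
import math

def sub(a, b):
    return tuple(x - y for x, y in zip(a, b))

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def length(v):
    return math.sqrt(dot(v, v))

def angular_radius(center, radius, point):
    """Half-angle of the cone from point that just encloses the sphere."""
    dist = length(sub(center, point))
    return math.asin(min(1.0, radius / dist)), dist

def disk_overlap_area(r1, r2, d):
    """Intersection area of two circles with radii r1, r2 and center distance d."""
    if d >= r1 + r2:
        return 0.0
    if d <= abs(r1 - r2):
        return math.pi * min(r1, r2) ** 2
    a1 = r1 * r1 * math.acos((d * d + r1 * r1 - r2 * r2) / (2.0 * d * r1))
    a2 = r2 * r2 * math.acos((d * d + r2 * r2 - r1 * r1) / (2.0 * d * r2))
    k = 0.5 * math.sqrt((-d + r1 + r2) * (d + r1 - r2) * (d - r1 + r2) * (d + r1 + r2))
    return a1 + a2 - k

def cone_visibility(point, light_center, light_radius, occ_center, occ_radius):
    """Approximate unblocked fraction of a spherical light, from one 'cone' sample."""
    alpha, d_light = angular_radius(light_center, light_radius, point)
    beta, d_occ = angular_radius(occ_center, occ_radius, point)
    if d_occ >= d_light:
        return 1.0  # rough test: occluder is not in front of the light
    to_l = [c / d_light for c in sub(light_center, point)]
    to_o = [c / d_occ for c in sub(occ_center, point)]
    gamma = math.acos(max(-1.0, min(1.0, dot(to_l, to_o))))
    covered = disk_overlap_area(alpha, beta, gamma) / (math.pi * alpha * alpha)
    return min(1.0, max(0.0, 1.0 - covered))

print(cone_visibility((0, 0, 0), (0, 10, 0), 1.0, (0.5, 5, 0), 0.3))
```

Because the result varies continuously with the angle between the cone axes, the penumbra comes out smooth from a single evaluation. In contrast, the sampled versions above produce banding or noise.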